How Consistent Hashing Solves Scalability Issues

Consistent hashing is a method that makes scaling distributed systems much smoother and more reliable. Unlike older hashing techniques that break down when servers are added or removed, consistent hashing reduces disruptions by redistributing only a small portion of data. This approach ensures:

- Minimal Data Movement: When a server is added or removed, only about 1/n of the keys are reassigned, avoiding system-wide disruptions.

- Better Load Distribution: Virtual nodes spread the workload evenly across servers, preventing hotspots and ensuring efficient use of resources.

- Improved Fault Tolerance: If a server fails, only its immediate neighbors take on the extra load, keeping the system stable.

- Cache Stability: Most cached data remains intact during scaling, reducing database pressure and maintaining performance.

Consistent hashing is widely used in modern systems like Amazon DynamoDB, Netflix’s CDN, and Discord to handle unpredictable traffic spikes and ensure reliable performance. By mapping servers and data onto a circular hash ring, it optimizes scalability and reliability in distributed architectures.

Consistent Hashing in Distributed Systems | Easy Explanation + Demo

sbb-itb-59e1987

How Consistent Hashing Works

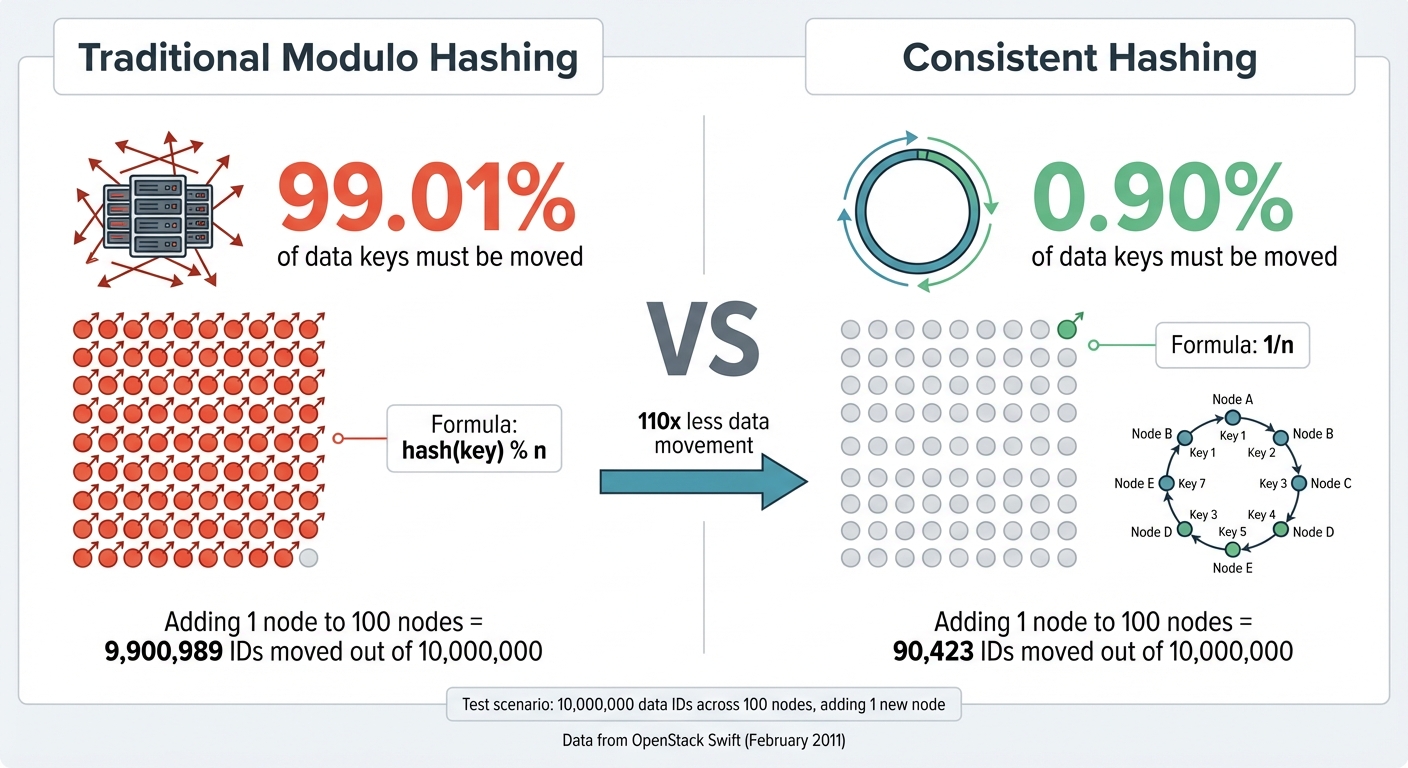

Consistent Hashing vs Traditional Hashing: Data Movement Comparison

The Hash Ring and Key Assignment

Consistent hashing uses a circular hash space, often called a hash ring, to replace the straightforward modulo approach. This ring represents hash values ranging from 0 to 2^32-1. Both servers and data keys are hashed with the same function and positioned on the ring.

When a key is requested, the system hashes the key to a specific location on the ring. From there, it moves clockwise until it reaches the first server marker, which is then responsible for storing and managing that key. This clockwise rule determines which server handles which portion of the hash space.

Unlike traditional hashing, consistent hashing doesn’t tie the system to the total number of servers. Each server occupies a specific point on the ring and owns the segment between itself and the previous server in a counter-clockwise direction.

Adding and Removing Nodes

When a new server is added, it’s hashed to a position on the ring and takes over keys from its next clockwise neighbor. Importantly, the rest of the system remains unchanged. For example, in a setup with 100 nodes, adding one more node would require only 0.90% of the data keys to move. In contrast, traditional hashing would necessitate relocating 99.01% of the data.

The process is similar when removing a server. If a server goes offline or fails, its keys are shifted to the next server clockwise. This targeted redistribution minimizes disruption, avoiding the widespread data movement and cache misses that can occur with traditional methods. By ensuring only a small fraction of keys are redistributed, consistent hashing supports scalable and reliable hosting systems.

With an efficient lookup time complexity of O(log N) when using a binary search tree to store node positions, consistent hashing ensures smooth performance even as the system grows. This streamlined data movement also lays the groundwork for optimizing load distribution through virtual nodes.

Using Virtual Nodes for Better Load Distribution

To improve load balancing, virtual nodes (VNodes) come into play. If a physical server appears at only one position on the ring, it can lead to uneven load distribution. Virtual nodes address this by assigning multiple positions on the ring to each physical server.

This strategy spreads the workload more evenly. When a server fails, its tasks are shared across several servers instead of burdening just one neighbor. Virtual nodes also allow for capacity-based weighting, meaning servers with greater resources (like more CPU or RAM) can handle a larger share of requests by being assigned more virtual nodes.

Typically, systems assign around 100 virtual nodes per server, offering fine-tuned control over load balancing. Even in large-scale deployments, the memory required is minimal. For instance, a hash ring supporting 60,000 physical servers with 6 million virtual nodes would only need about 12 to 27 megabytes of memory to store the mapping. This combination of efficiency and flexibility makes virtual nodes a vital tool for consistent hashing systems.

How Consistent Hashing Solves Scalability Problems

Less Data Movement When Scaling

One of the standout benefits of consistent hashing is how it minimizes data movement when scaling up or down. In traditional modulo hashing, even a small adjustment – like adding a single server to a large cluster – can require nearly all keys to be reassigned. Consistent hashing, on the other hand, only redistributes about 1/n of the keys when a new server is introduced. This drastically reduces the amount of data shuffling across the network. For example, in a test with 1,500 items spread across 80 machines (some of which experienced changes), consistent hashing caused only a 25% increase in remapped pairs, while traditional hashing would have required almost all keys to be moved. This efficiency is crucial in preventing network congestion and service interruptions, especially in environments where moving large amounts of data can be disruptive. By limiting data movement, consistent hashing ensures a more stable system, even during node failures.

Better Performance and Reliability

Consistent hashing also improves performance and reliability by containing the impact of node failures. In traditional modulo-based systems, the failure of a single node can require rehashing up to 90% of the keys, resulting in a flood of re-computation requests to origin servers. With consistent hashing, disruptions are localized – only the neighboring nodes on the hash ring take on the additional load. Early implementations found that the slight extra overhead from traversing the hash ring was negligible compared to the time spent on network transmissions.

A notable application of consistent hashing comes from Akamai Technologies, which used it in its Content Delivery Network to distribute traffic across rotating web servers. This approach helped solve the "slashdotting" problem of the 1990s, where sudden traffic surges would crash servers. Tim Berners-Lee even credited this solution with addressing these traffic spikes effectively.

Maintaining Cache Efficiency

Efficient caching is critical for both performance and cost management, and consistent hashing plays a key role in maintaining cache integrity. By limiting data reassignment to a small fraction of keys, consistent hashing helps preserve "warm" caches, which store frequently accessed data. This is essential because cache misses can lead to costly database queries and increased pressure on backend systems. By keeping most cached data intact during scaling events, consistent hashing minimizes the risk of widespread cache invalidation.

"By minimizing cache invalidation, consistent hashing enhances the user experience through faster load times and reduces bandwidth costs." – Naeem Ul Haq, System Design Expert

A real-world example of this can be seen in Discord’s scaling efforts in July 2017. To support 5,000,000 concurrent users, Discord leveraged consistent hashing within its Elixir-based architecture. This allowed specific chat rooms to be mapped to the right host nodes efficiently, ensuring smooth scaling and reliable performance. Beyond preserving cache efficiency, consistent hashing also helps distribute workloads effectively, even when server capabilities vary.

Working with Different Server Capacities

In environments with diverse server hardware, consistent hashing uses virtual nodes to balance the load based on each virtual private server’s capacity. For instance, a server with twice the capacity of another can be assigned twice as many virtual nodes, enabling it to handle a proportionally larger share of the workload. By assigning virtual nodes accordingly – e.g., 100 nodes for standard servers and 200 for high-capacity ones – the system achieves balanced load distribution with minimal fluctuations. This approach ensures that more powerful servers are fully utilized, while less capable ones handle workloads that match their capacity. The result is a well-balanced and efficient hosting setup that adapts seamlessly to varying hardware capabilities.

Implementation Considerations for Consistent Hashing

Now that we’ve covered the advantages, let’s dive into the practical details of implementing consistent hashing effectively.

Selecting a Hash Function

The hash function you choose plays a critical role in performance and key distribution. For most hosting environments, non-cryptographic hash functions like MurmurHash, xxHash, or MetroHash are ideal because they’re fast and don’t burden the CPU with unnecessary security overhead. Cryptographic hash functions (e.g., MD5, SHA-1) are overkill for this purpose and can slow down your system.

"An optimal hash function for consistent hashing must be fast and produce uniform output." – Neo Kim

A good hash function ensures that keys are evenly distributed across the hash space, avoiding hotspots where a single node gets overloaded. A 32-bit hash function offers about 4.29 billion possible positions on the virtual ring, which is plenty of space to reduce collisions. To maintain consistency, all clients and nodes must use the same hash function, ensuring they agree on how keys map to nodes. Additionally, using hash outputs that are powers of two enables faster bitwise operations, which are more efficient than modulo calculations.

Managing Node Changes

Handling changes in the cluster – like nodes joining or leaving – is another critical aspect of consistent hashing. The hash ring must adjust dynamically without disrupting services. Using a self-balancing binary search tree (BST) to store node positions ensures that lookup operations remain efficient, with a complexity of O(log N), even as the ring evolves. This structure makes it easy to quickly locate the "next node clockwise" for any given key.

To manage updates safely, use readers-writer locks to synchronize changes to the BST when nodes are added or removed. A gossip protocol can also help by enabling nodes to exchange state information periodically in a peer-to-peer manner. This avoids the need for a central controller, which could become a bottleneck. To prevent overloading a single neighbor when a node fails, randomize the initial partition assignments so the load spreads evenly across the cluster. Once these mechanisms are in place, continuous monitoring will help maintain balance.

Monitoring and Tuning Load Distribution

Even with a well-designed hash ring, keeping an eye on load distribution is essential to prevent runtime imbalances. Regularly track the number of keys each node owns to catch potential issues early. Pay close attention to the number of virtual nodes assigned to each physical node – assigning around 100 virtual nodes per physical node is a good starting point for detecting and resolving imbalances.

"A good rule to follow might be to calculate 100 virtual nodes to each real node at maximum capacity. This would allow you to alter the load on any given node by 1%." – Greg Holt

For systems with mixed hardware capabilities, you can assign more virtual nodes to servers with greater CPU or memory resources, ensuring they handle a proportionally larger share of the workload. To prevent any single node from being overwhelmed, implement bounded loads – if a node exceeds its capacity, redirect incoming requests to a fallback node.

A real-world example of this principle in action is OpenStack Swift. In February 2011, they demonstrated that with 100 nodes and 10,000,000 data IDs, adding a single node with consistent hashing and 1,000 virtual nodes resulted in only 90,423 IDs (0.90%) being moved. By contrast, traditional modulus hashing required moving 9,900,989 IDs (99.01%). This illustrates how consistent hashing can make scaling much more efficient while minimizing disruptions.

Conclusion

The Key Advantages of Consistent Hashing

Consistent hashing is a game-changer for distributed systems, offering a way to scale efficiently by relocating only a fraction (1/n) of keys when servers are added or removed. Unlike traditional modulo hashing, this method keeps most of the keys stable, ensuring high cache hit rates and preventing servers from being overwhelmed.

Another standout feature is its fault tolerance. If a node goes down, only the keys assigned to that node are redistributed to the next one in the hash ring, leaving the rest of the system unaffected. Virtual nodes further improve this process by spreading data more evenly across servers and allowing stronger servers to handle more traffic. Together, these features create a framework for resilient and high-performing infrastructures.

"Consistent hashing makes the distribution of the keys independent of the number of servers used by the system. Thus, we can scale up or down without impacting the overall system." – Animesh Gaitonde, Tech Lead at Amazon

Real-world examples highlight these benefits. For instance, Amazon’s DynamoDB relies on consistent hashing to manage massive traffic spikes, such as those on Black Friday, without any hiccups. Similarly, Netflix uses it in its Open Connect CDN to effectively map content to edge servers across the globe.

Consistent Hashing in Modern Hosting

Thanks to its efficiency and reliability, consistent hashing has become a cornerstone of modern hosting solutions. Hosting providers use this method to scale effortlessly and balance traffic across global data centers. The ability to add or remove capacity without causing widespread data redistribution ensures steady performance and reliability.

This technique fits perfectly into today’s hosting architectures, which must handle dynamic workloads and operate across multiple regions. With lookup times as low as 20 microseconds and the ability to maintain cache effectiveness during infrastructure changes, consistent hashing empowers hosting solutions to deliver stable services as systems evolve. At Serverion, we’ve adopted consistent hashing principles to provide flexible and high-performing hosting across our distributed data centers.

FAQs

How does consistent hashing help reduce data movement when scaling distributed systems?

Consistent hashing operates by arranging nodes and data in a circular hash ring. When a node joins or leaves the system, only the data linked to that specific node and its nearest neighbor gets reassigned. This method significantly reduces the amount of data that needs to be moved, affecting only a small fraction of the overall dataset.

This design minimizes disruptions during scaling, enabling a smoother and more efficient process. It’s particularly well-suited for distributed systems that manage constantly changing workloads.

How do virtual nodes help distribute load in consistent hashing?

Virtual nodes, or vnodes, play a vital role in consistent hashing, helping to distribute loads more evenly in distributed systems. Instead of linking each server to just one spot on the hash ring, servers are assigned multiple virtual positions. This splits the key space into smaller, easier-to-handle sections, ensuring traffic and storage are spread more evenly across all servers.

Here’s how it works: when a key is hashed, it’s assigned to the nearest vnode moving clockwise on the hash ring. With multiple vnodes per server, the system avoids overwhelming any single server, maintaining a balanced load. Adding or removing a server only affects the keys tied to its vnodes, reducing the amount of data that needs to be moved. This design supports smooth scaling and ensures reliable performance – something critical for infrastructures like Serverion’s hosting platform, where efficient resource management is essential for delivering consistent results.

How does consistent hashing enhance fault tolerance in distributed systems?

Consistent hashing strengthens fault tolerance by distributing data across nodes in a way that minimizes disruption when a node goes offline. It works through a circular hash ring that maps both data and servers. When a node fails, only the data linked to that specific node is reassigned to its closest neighbor on the ring. This approach significantly reduces data movement while keeping the rest of the system running smoothly.

This method not only ensures high availability but also supports scalability. Adding or removing nodes causes minimal disturbance to the system. By effectively managing node failures, consistent hashing becomes a cornerstone for creating reliable distributed systems.