5 AI Strategies for Energy-Efficient Data Centers

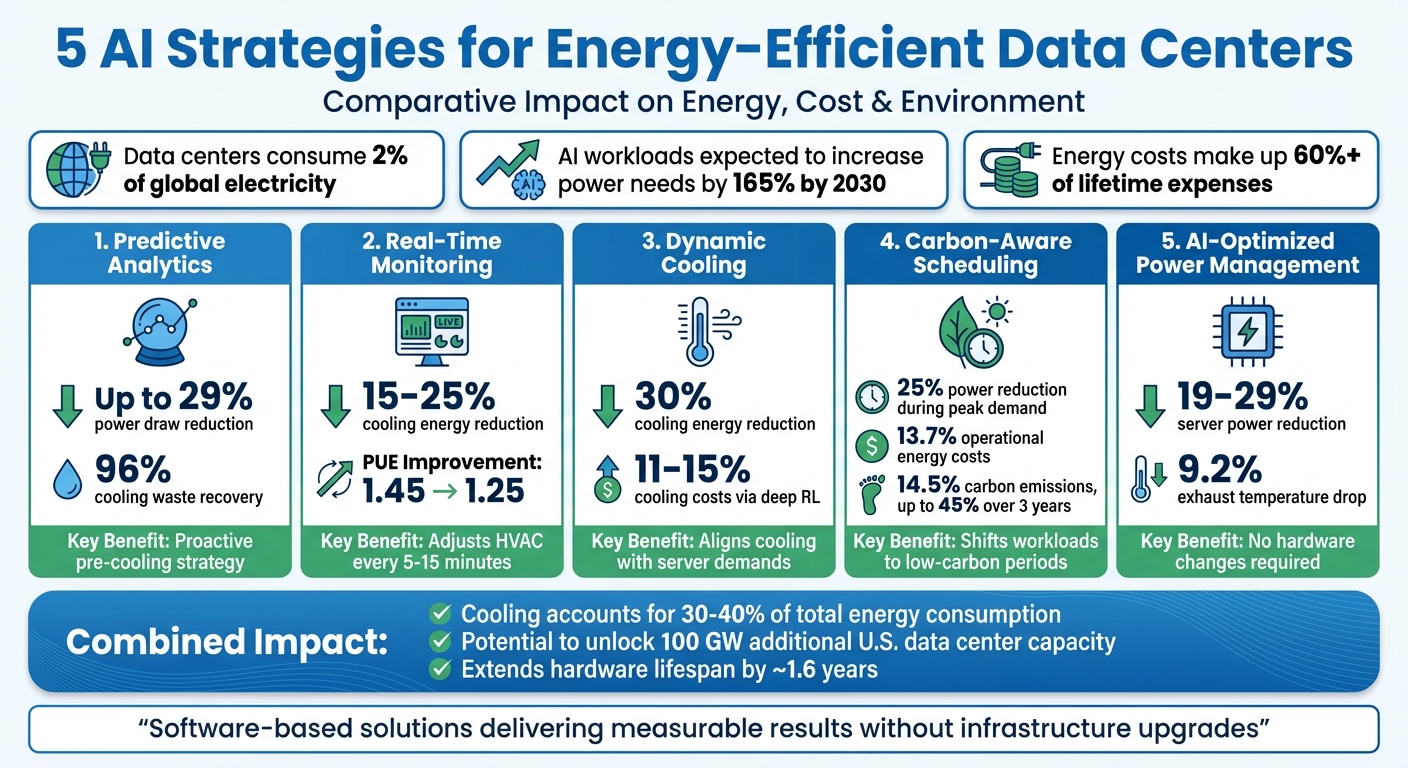

Data centers consume 2% of global electricity and face rising energy demands due to AI workloads, which are expected to increase power needs by 165% by 2030. With energy costs making up 60% or more of lifetime expenses, improving efficiency is crucial. Here are five AI strategies to cut energy use, reduce costs, and address environmental concerns:

- Predictive Analytics: AI forecasts workload spikes so cooling can be adjusted in advance. In trials, AI-driven server optimization cut power draw by up to 29%, and one analysis found that 96% of identified cooling energy waste could be recovered.

- Real-Time Monitoring: AI systems adjust HVAC settings every few minutes, cutting cooling energy by 15–25% and lowering maintenance costs by detecting issues early.

- Dynamic Cooling: Adaptive systems match cooling to server demand, cutting cooling energy by up to 30% and reducing wear on hardware.

- Carbon-Aware Scheduling: AI shifts flexible workloads to times of lower grid carbon intensity, reducing emissions; one study reported 13.7% lower energy costs.

- AI-Optimized Power Management: Machine learning fine-tunes server power usage, with one trial reporting 19–29% lower power draw without hardware changes.

These strategies not only lower energy consumption but also help data centers meet ESG goals and avoid costly infrastructure upgrades. AI-driven methods are transforming data centers into efficient, grid-responsive facilities.

5 AI Strategies for Data Center Energy Efficiency: Impact Comparison


1. Predictive Analytics for Workload Management

Predictive analytics uses machine learning models, such as Long Short-Term Memory (LSTM) networks, often paired with Reinforcement Learning controllers, to predict workload spikes. By analyzing real-time IoT sensor data (IT load, temperature, and humidity), AI systems can forecast cooling demand and adjust airflow ahead of time. This proactive "pre-cooling" avoids the high energy consumption of traditional reactive systems, which respond only after temperatures have already risen.

Energy Efficiency Improvements

In April 2023, World Wide Technology (WWT) trialed QiO Technologies’ "Foresight Optima DC+" AI software on Dell R650 and R750 servers at its Advanced Technology Center. Power draw fell by 19–23% under flat loads and by 27–29% under varying loads, and exhaust temperatures dropped by 9.2% while the software was active. Gary Chandler, CTO of QiO Technologies, explained where the savings come from:

"As server usage has historically been managed conservatively to guarantee uptime and Service Level Agreements (SLAs), sleep states have not been utilized effectively. Exploiting this fact with a data-driven optimization approach allows for significant energy consumption savings to be achieved without impacting QoS."

These improvements not only reduce energy usage but also pave the way for meaningful operational cost savings.

Cost Reduction Potential

Lower power draw has a compounding effect: servers that draw less power generate less heat, which in turn reduces the load on cooling systems. Because cooling accounts for 30–40% of total data center energy consumption, even small reductions in server power can translate into significant savings. For example, in January 2026, researchers analyzing a year’s worth of operational data from the Frontier exascale supercomputer identified 85 MWh of annual cooling energy waste. Using a physics-guided machine learning framework, they showed that 96% of this waste could be recovered through small, safe adjustments to coolant flow and temperature setpoints.
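The cascade from server power to cooling can be estimated with simple arithmetic. The sketch below assumes that non-IT overhead (mostly cooling) scales in proportion to IT load, so each kilowatt saved at the servers saves roughly PUE kilowatts at the utility meter; that proportional model is a simplification, and the 1,000 kW, 20%, and PUE 1.5 inputs are hypothetical. It also checks the Frontier figure from the text.

```python
def facility_savings_kw(it_load_kw: float, it_reduction: float, pue: float) -> float:
    """Estimate meter-level savings from an IT power cut.

    Assumes cooling and other overhead scale linearly with IT load, so total
    facility power is it_load * pue both before and after the change.
    """
    it_saved_kw = it_load_kw * it_reduction
    return it_saved_kw * pue


def recoverable_waste_mwh(annual_waste_mwh: float, recoverable_fraction: float) -> float:
    """Portion of identified cooling waste that could be recovered."""
    return annual_waste_mwh * recoverable_fraction


# Hypothetical facility: 1,000 kW of IT load, 20% server power cut, PUE 1.5
print(facility_savings_kw(1000.0, 0.20, 1.5))        # 300.0 kW at the meter, not 200
# Frontier figures from the text: 96% of 85 MWh per year
print(round(recoverable_waste_mwh(85.0, 0.96), 1))   # 81.6
```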

Environmental Impact Reduction

Beyond cost savings, lower power consumption has clear environmental benefits, and predictive analytics also lets data centers act as flexible grid assets. In May 2025, Emerald AI partnered with Oracle Cloud Infrastructure and NVIDIA for a field trial in Phoenix, Arizona. Running the "Emerald Conductor" software on a 256-GPU cluster, they cut power usage by 25% during a three-hour peak grid event for the utilities Arizona Public Service (APS) and Salt River Project (SRP), without hardware changes and while maintaining Quality of Service guarantees. If data centers reduced power usage by 25% for just 200 hours a year, this approach could unlock up to 100 GW of additional data center capacity in the U.S. without requiring large-scale investment in new generation or transmission infrastructure.

2. Real-Time Monitoring and Automation

Real-time monitoring replaces traditional rule-based HVAC controls with AI-driven systems that respond continuously to changing workloads and environmental conditions. Dense IoT sensor networks feed temperature, humidity, and IT load readings to the controller, which updates its settings every 5–15 minutes. This closed-loop setup directly controls HVAC components such as fan speeds, chilled water valves, and airflow patterns, keeping cooling matched to real-time demand.
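A closed loop of this kind can be sketched in a few lines. The controller below is a hypothetical example, not any vendor's algorithm: once per control interval it nudges fan speed by an amount proportional to the gap between the measured inlet temperature and a target, then clamps the result to a safe range. The target, gain, and limits are assumed values.

```python
from dataclasses import dataclass


@dataclass
class FanSpeedController:
    """Incremental (velocity-form) controller for one air handler; hypothetical tuning.

    Call step() once per control interval (e.g., every 5–15 minutes) with the
    latest cold-aisle inlet temperature from the sensor network.
    """
    target_inlet_c: float = 25.0   # assumed target inlet temperature
    gain_pct_per_c: float = 8.0    # fan-speed change per degree of error (assumed)
    min_pct: float = 30.0          # keep a minimum airflow for safety
    max_pct: float = 100.0
    speed_pct: float = 50.0        # currently commanded fan speed

    def step(self, measured_inlet_c: float) -> float:
        error_c = measured_inlet_c - self.target_inlet_c
        proposed = self.speed_pct + self.gain_pct_per_c * error_c
        # Clamp to the fan's safe operating range before applying
        self.speed_pct = min(self.max_pct, max(self.min_pct, proposed))
        return self.speed_pct


ctrl = FanSpeedController()
for reading in [26.0, 25.5, 24.0, 23.0]:
    print(reading, ctrl.step(reading))
```

Because the adjustment accumulates across intervals, fans settle at whatever speed holds the target, rather than at a fixed speed chosen for the worst case.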

Energy Efficiency Improvements

Switching from static controls to AI-powered automation has produced clear energy savings. For example, Google’s AI system achieved a 40% reduction in cooling energy use, lowering its PUE from 1.45 to 1.25 and bringing it closer to the ideal PUE of 1.0, at which all facility energy goes to IT equipment.
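PUE is total facility energy divided by IT equipment energy, so everything above 1.0 is overhead such as cooling and power conversion. The short calculation below shows what a move from 1.45 to 1.25 means for a fixed IT load; the 10,000 kWh figure is a hypothetical input.

```python
def pue(total_facility_kwh: float, it_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    return total_facility_kwh / it_kwh


def facility_kwh(it_kwh: float, pue_value: float) -> float:
    """Total facility energy implied by an IT load and a PUE."""
    return it_kwh * pue_value


it_kwh = 10_000.0                     # hypothetical IT energy over some period
before = facility_kwh(it_kwh, 1.45)   # about 14,500 kWh
after = facility_kwh(it_kwh, 1.25)    # 12,500 kWh
print(f"overhead before: {before - it_kwh:.0f} kWh, after: {after - it_kwh:.0f} kWh")
print(f"total facility savings: {(before - after) / before:.1%}")
```

Overhead falls from 4,500 to 2,500 kWh, a cut of more than 40%, yet total facility energy drops by only about 14%, which is worth keeping in mind when comparing headline figures.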

AI-based predictive HVAC systems typically cut cooling energy use by 15–25% compared to traditional methods. Advanced AI models have gone even further, reducing fan energy consumption by up to 55.7% by identifying site-specific optimizations that would otherwise go unnoticed.

Cost Reduction Potential

With cooling and air handling accounting for around 38–40% of a data center’s energy use, even small efficiency gains can lead to substantial cost savings. AI automation fine-tunes fan speeds and maintains stable temperatures, reducing mechanical wear and extending equipment life. Additionally, by detecting issues like failing fans or blocked filters early, these systems help prevent costly emergency repairs and downtime.
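Early fault detection often starts with something as simple as comparing each sensor against its own recent baseline. The sketch below applies a z-score test to fan RPM readings; the fan IDs, readings, and three-sigma threshold are hypothetical, and a production system might also compare RPM against commanded speed or track the pressure drop across filters.

```python
from statistics import mean, stdev


def is_anomalous(history: list[float], latest: float, z_threshold: float = 3.0) -> bool:
    """Flag a reading more than `z_threshold` standard deviations from the
    sensor's own recent history (needs at least two history samples)."""
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > z_threshold


def failing_fans(rpm_history: dict[str, list[float]], latest_rpm: dict[str, float]) -> list[str]:
    """Return IDs of fans whose latest RPM breaks from their baseline."""
    return [fan for fan, rpm in latest_rpm.items()
            if is_anomalous(rpm_history[fan], rpm)]


history = {
    "crah-1-fan-a": [3000, 3010, 2990, 3005, 2995],
    "crah-1-fan-b": [2980, 3000, 2990, 3010, 3000],
}
print(failing_fans(history, {"crah-1-fan-a": 3003, "crah-1-fan-b": 2100}))  # ['crah-1-fan-b']
```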

To ease adoption, operators can initially use AI systems in a "recommendation mode" to build confidence before transitioning to fully autonomous control. This phased approach not only simplifies implementation but also boosts labor efficiency, which becomes increasingly important as facilities scale up.
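The phased rollout can be expressed as a single switch between recommending and applying. In this hypothetical sketch, every AI suggestion is first clamped to safe bounds; in recommendation mode it is only logged for an operator, while in autonomous mode it is applied. The 18–27 °C bounds mirror ASHRAE's commonly cited recommended inlet range, but a real deployment would use its own site-specific limits.

```python
from dataclasses import dataclass, field


@dataclass
class SetpointGate:
    """Routes AI setpoint recommendations through a safety clamp and a mode switch.

    In recommendation mode the current setpoint is kept and the suggestion is
    logged for an operator; in autonomous mode the clamped value is applied.
    """
    autonomous: bool = False
    min_c: float = 18.0   # assumed lower bound
    max_c: float = 27.0   # assumed upper bound
    log: list[str] = field(default_factory=list)

    def handle(self, current_c: float, recommended_c: float) -> float:
        safe_c = min(self.max_c, max(self.min_c, recommended_c))
        if self.autonomous:
            self.log.append(f"applied {safe_c:.1f} C")
            return safe_c
        self.log.append(f"recommended {safe_c:.1f} C, awaiting operator approval")
        return current_c


gate = SetpointGate()                  # phase 1: recommendation mode
print(gate.handle(24.0, 26.0), gate.log[-1])
gate.autonomous = True                 # phase 2: after operators trust the model
print(gate.handle(24.0, 30.0), gate.log[-1])
```

Keeping the clamp in front of both modes means the switch to autonomy changes who approves a change, not which changes are possible.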

Scalability for Large Data Centers

Real-time monitoring and automation are highly scalable, making them suitable for facilities of all sizes. Research on exascale supercomputers has shown that physics-guided machine learning frameworks can uncover and correct significant cooling inefficiencies through automated adjustments, all while maintaining safe operational limits.

Environmental Impact Reduction

Beyond cost savings, real-time automation allows data centers to serve as active participants in grid management. By using software-driven power management, these systems can reduce energy consumption during peak demand periods without requiring hardware upgrades. This not only enhances grid stability but also supports broader energy efficiency goals, making data centers more sustainable and responsive to grid needs.

3. Dynamic Cooling Systems

Dynamic cooling replaces fixed setpoints with adaptive systems that respond in real time to server workloads and environmental changes. Instead of relying on static rules like traditional HVAC systems, these AI-powered systems use predictive models, such as Reinforcement Learning combined with Long Short-Term Memory networks, to anticipate IT loads and ambient temperature shifts. Cooling adjustments can then be made proactively, reducing unnecessary energy use and keeping cooling aligned with fluctuating demand.

Energy Efficiency Improvements

Dynamic cooling relies on predictive analytics to fine-tune thermal conditions continuously. AI algorithms adjust fan speeds and damper positions based on real-time heat maps, evening out temperature distribution while reducing energy use. For example, AI-driven airflow optimization can cut cooling energy consumption by up to 30%. Deep reinforcement learning methods have also demonstrated cooling cost reductions of 11–15% while meeting strict thermal requirements.
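Acting on a heat map means giving each zone the airflow it needs instead of running every fan at the speed the hottest spot demands. The hypothetical mapping below keeps zones at or below target on a low base speed and adds airflow in proportion to how far a zone runs hot; the zone names, target, and gains are assumptions, and a learned controller would replace this fixed rule.

```python
def zone_fan_speeds(inlet_temps_c: dict[str, float],
                    target_c: float = 25.0,
                    base_pct: float = 40.0,
                    pct_per_c: float = 10.0,
                    max_pct: float = 100.0) -> dict[str, float]:
    """Map a heat map of zone inlet temperatures to per-zone fan speeds.

    Zones at or below target run at a low base speed; hotter zones get extra
    airflow in proportion to how far they exceed the target (capped at max_pct).
    """
    speeds = {}
    for zone, temp_c in inlet_temps_c.items():
        excess_c = max(0.0, temp_c - target_c)
        speeds[zone] = min(max_pct, base_pct + excess_c * pct_per_c)
    return speeds


heat_map = {"row-A": 23.5, "row-B": 27.0, "row-C": 33.0}
print(zone_fan_speeds(heat_map))  # {'row-A': 40.0, 'row-B': 60.0, 'row-C': 100.0}
```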

Cost Reduction Potential

Cooling typically accounts for 30–40% of a data center’s energy use, so even small efficiency gains can lead to major cost savings. AI-based predictive control can lower cooling energy consumption by 15–25% compared to traditional systems, improving Power Usage Effectiveness (PUE) and maintaining safe operating conditions for equipment.

"AI-based approach can reduce cooling energy use by approximately 15–25% relative to conventional controls, thereby improving the facility’s Power Usage Effectiveness (PUE) and maintaining safe thermal conditions for IT equipment." – Mamtakumari Chauhan, Jones Lang LaSalle Inc.

As with real-time automation, optimizing fan speeds and holding temperatures steady reduces mechanical wear and extends equipment life, while early detection of issues such as failing fans or blocked filters helps prevent expensive repairs and downtime.

Scalability for Large Data Centers

Dynamic cooling systems are highly scalable, making them practical for facilities of any size. Hierarchical control frameworks coordinate resources across levels, from cluster workload management down to rack-specific cooling adjustments. In January 2026, researchers Nardos Belay Abera and Yize Chen developed such a hierarchical control framework and evaluated it on real Microsoft Azure inference traces. Their system synchronized GPU performance with cooling resources such as airflow and supply air temperature, achieving 31.2% cooling-energy savings and 24.2% computing-energy savings while meeting latency requirements. Large data centers benefit from this approach because their significant thermal mass lets AI controllers operate effectively at control intervals of 5–15 minutes, without needing ultra-fast processing.
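The two-level structure can be illustrated with a deliberately simple sketch: a cluster-level controller divides a power budget across racks, and each rack-level controller chooses a supply-air temperature from its share. This is not the Abera and Chen framework, which coordinates GPU performance and cooling jointly; the budget, rack capacities, and linear temperature policy are hypothetical.

```python
def allocate_power(budget_kw: float, demand_kw: dict[str, float]) -> dict[str, float]:
    """Cluster level: grant each rack its demand, scaling all racks down
    proportionally if total demand exceeds the cluster power budget."""
    total = sum(demand_kw.values())
    if total <= budget_kw:
        return dict(demand_kw)
    scale = budget_kw / total
    return {rack: kw * scale for rack, kw in demand_kw.items()}


def rack_supply_air_c(allocated_kw: float, rack_capacity_kw: float,
                      warm_c: float = 27.0, cool_c: float = 18.0) -> float:
    """Rack level: choose a supply-air temperature from the rack's allocation.
    Lightly loaded racks run warmer (cheaper) air; full racks get the coolest."""
    load = min(allocated_kw / rack_capacity_kw, 1.0)
    return warm_c - load * (warm_c - cool_c)


grants = allocate_power(90.0, {"rack-1": 60.0, "rack-2": 40.0, "rack-3": 20.0})
for rack, kw in grants.items():
    print(rack, kw, rack_supply_air_c(kw, rack_capacity_kw=60.0))
```

The upper level only hands down a number per rack, so local controllers can run on their own schedule; that separation is what lets the pattern extend to many racks.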

Environmental Impact Reduction

Dynamic cooling systems also support sustainability goals. By aligning cooling demand with renewable energy availability, they can reduce carbon footprints during periods of peak energy use. Advanced physics-guided machine learning models can predict power usage effectiveness to within 0.01 of actual values for 98.7% of samples, giving operators precise data for environmental monitoring. These systems are especially effective in high-density computing environments, where liquid cooling optimizes flow rates and temperatures for racks exceeding 80 kW, helping data centers handle the growing demands of AI workloads without overburdening energy resources.
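Sizing liquid-cooling flow follows from the heat balance Q = ṁ · c_p · ΔT: the coolant mass flow needed equals the rack heat load divided by the coolant's specific heat times its allowed temperature rise. The sketch assumes plain water (c_p ≈ 4.186 kJ/kg·K, about 1 kg per litre) and hypothetical temperature rises; real loops may use glycol mixtures with different properties.

```python
WATER_CP_KJ_PER_KG_K = 4.186   # specific heat of water, approximate
WATER_KG_PER_L = 1.0           # density approximation near room temperature


def coolant_flow_l_per_min(heat_kw: float, delta_t_k: float,
                           cp_kj_per_kg_k: float = WATER_CP_KJ_PER_KG_K,
                           kg_per_l: float = WATER_KG_PER_L) -> float:
    """Flow needed to carry `heat_kw` away with a `delta_t_k` coolant temperature rise.

    From Q = m_dot * c_p * dT  =>  m_dot = Q / (c_p * dT)   [kg/s when Q is in kW]
    """
    mass_flow_kg_s = heat_kw / (cp_kj_per_kg_k * delta_t_k)
    return mass_flow_kg_s / kg_per_l * 60.0


for rise in (8.0, 10.0, 12.0):
    print(f"80 kW rack, {rise:.0f} K rise: {coolant_flow_l_per_min(80.0, rise):.1f} L/min")
```

At a 10 K rise, an 80 kW rack needs roughly 115 L/min. Allowing a larger rise lowers the required flow and pump energy, which is the kind of trade-off an optimizer tunes against the thermal limits of the hardware.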


4. Carbon-Aware AI Scheduling

Carbon-aware AI scheduling transforms data centers into dynamic grid assets, adjusting flexible AI tasks based on real-time carbon intensity. This method prioritizes running workloads like model training or batch processing during times when renewable energy is more prevalent on the grid. Techniques such as GPU frequency scaling and workload deferral enable these systems to align operations with grid conditions.
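Workload deferral can be sketched as a search over start times. Given an hourly carbon-intensity forecast, the hypothetical function below picks the window with the lowest total intensity that still finishes before the job's deadline; the forecast values are invented, and GPU frequency scaling, the other technique mentioned above, is not modeled.

```python
def best_start_hour(carbon_g_per_kwh: list[float], duration_h: int, deadline_h: int) -> int:
    """Pick the start hour that minimizes total forecast carbon intensity over
    the job's run, subject to finishing by `deadline_h` (hours from now)."""
    if deadline_h > len(carbon_g_per_kwh):
        raise ValueError("forecast does not cover the deadline")
    starts = range(0, deadline_h - duration_h + 1)
    if not starts:
        raise ValueError("job cannot finish before the deadline")
    return min(starts, key=lambda s: sum(carbon_g_per_kwh[s:s + duration_h]))


# Hypothetical hourly grid forecast (gCO2/kWh): midday solar pulls intensity down
forecast = [420.0, 400.0, 310.0, 190.0, 160.0, 210.0, 380.0, 450.0]
start = best_start_hour(forecast, duration_h=3, deadline_h=8)
print(f"defer batch job to hour {start}")  # hour 3
```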

Energy Efficiency Improvements

The May 2025 Emerald AI-led trial classified tasks into flexibility tiers: critical jobs ran at full capacity, while batch training tolerated a 25–50% slowdown. With this approach, the trial achieved a 25% reduction in power usage during peak grid demand without compromising service quality. Conducted in Phoenix, Arizona, it was a collaboration between Emerald AI, Oracle Cloud Infrastructure, NVIDIA, and Salt River Project, with the "Emerald Conductor" platform running on a 256-GPU cluster.

"By orchestrating AI workloads based on real-time grid signals without hardware modifications or energy storage, this platform reimagines data centers as grid-interactive assets that enhance grid reliability, advance affordability, and accelerate AI’s development." – Philip Colangelo et al., Emerald AI

This approach, combined with workload prediction and dynamic cooling, represents a key strategy in optimizing energy use within data centers.

Cost Reduction Potential

In addition to energy savings, carbon-aware scheduling delivers clear cost benefits. In one study, multi-agent reinforcement learning controllers reduced operational energy costs by 13.7% while cutting carbon emissions by 14.5%. Unlike hardware upgrades or battery installations, software-based orchestration avoids significant capital expenses, making it viable for data centers of all sizes. Google’s Carbon-Intelligent Compute Management system is a prime example: it uses Virtual Capacity Curves to limit the resources available to flexible tasks based on day-ahead carbon forecasts, deferring workloads to lower-carbon periods while ensuring they complete within 24 hours.
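The core idea behind a virtual capacity curve can be shown with a greedy sketch: grant flexible-compute capacity to the cleanest hours first until the day's flexible demand is covered. Google's production system is considerably more involved; the function name, units, and numbers here are hypothetical and only convey the shape of the idea.

```python
def virtual_capacity_curve(carbon_forecast: list[float],
                           flexible_demand: float,
                           hourly_cap: float) -> list[float]:
    """Build an hourly cap on flexible compute for the coming day.

    Capacity is granted to the cleanest hours first until the day's flexible
    demand is covered, so deferred work still finishes within the window.
    """
    if flexible_demand > hourly_cap * len(carbon_forecast):
        raise ValueError("demand cannot be met within the window")
    curve = [0.0] * len(carbon_forecast)
    remaining = flexible_demand
    for hour in sorted(range(len(carbon_forecast)), key=lambda h: carbon_forecast[h]):
        if remaining <= 0:
            break
        curve[hour] = min(hourly_cap, remaining)
        remaining -= curve[hour]
    return curve


# Hypothetical 6-hour slice: 25 units of flexible work, at most 10 units per hour
print(virtual_capacity_curve([500, 300, 100, 200, 450, 350], 25.0, 10.0))
# [0.0, 5.0, 10.0, 10.0, 0.0, 0.0]
```

Unlike per-job placement, the curve caps aggregate flexible capacity hour by hour and leaves the scheduler free to decide which jobs fill it.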

This method is scalable and adaptable, positioning it as a practical tool for large-scale operations and grid integration.

Scalability for Large Data Centers

Carbon-aware systems can scale across distributed facilities using hierarchical control frameworks. Global controllers manage workload distribution across multiple locations, directing tasks to regions with lower grid carbon intensity. Meanwhile, local controllers handle resource allocation and temporal adjustments within individual centers. This setup works efficiently across varying server loads, ensuring reliable performance while enabling facilities to participate in grid-responsive activities.
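The global layer of such a system can be sketched as a placement loop. In this hypothetical example, each job goes to the lowest-carbon site that still has enough free GPUs, with the largest jobs placed first; site names, carbon intensities, and GPU counts are invented, and the local layer (timing and resource allocation within each site) is left out.

```python
from typing import Optional


def route_jobs(jobs_gpus: dict[str, int],
               sites: dict[str, tuple[float, int]]) -> dict[str, Optional[str]]:
    """Global controller: place each job at the lowest-carbon site with enough
    free GPUs. `sites` maps site name -> (grid gCO2/kWh, free GPUs).
    Largest jobs are placed first; None means no site can take the job now."""
    free = {name: gpus for name, (_, gpus) in sites.items()}
    by_carbon = sorted(sites, key=lambda name: sites[name][0])
    placement: dict[str, Optional[str]] = {}
    for job, need in sorted(jobs_gpus.items(), key=lambda item: -item[1]):
        placement[job] = None
        for name in by_carbon:
            if free[name] >= need:
                placement[job] = name
                free[name] -= need
                break
    return placement


sites = {"site-west": (250.0, 64), "site-north": (40.0, 32), "site-east": (400.0, 128)}
jobs = {"train-a": 32, "train-b": 48, "batch-c": 16}
print(route_jobs(jobs, sites))
```

Here the 48-GPU job does not fit at the cleanest site, so it lands at the next-cleanest, while the 32-GPU job fills the cleanest site exactly.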

Environmental Impact Reduction

Beyond efficiency and scalability, carbon-aware scheduling reduces environmental impact by monitoring hardware "State-of-Health" metrics. This helps manage hardware degradation, which can increase energy consumption over time. By optimizing workload placement to extend hardware life – by approximately 1.6 years – these systems reduce the embodied carbon from manufacturing and replacements. Federated carbon intelligence approaches have shown cumulative CO₂ reductions of up to 45% over three years by balancing operational and embodied emissions. Additionally, load flexibility that reduces power by 25% for less than 1% of the year could unlock up to 100 GW of new data center capacity in the U.S., all without requiring new infrastructure for generation or transmission.
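The balance between embodied and operational emissions can be framed as an average yearly footprint: embodied carbon spread over the server's life plus average operational emissions, which rise as hardware degrades. The sketch below assumes continuous exponential degradation; the 1,500 kg, 2,000 kg/yr, and degradation-rate inputs are invented for illustration and are not drawn from the studies mentioned above.

```python
import math


def annualized_footprint_kg(embodied_kg: float, lifetime_years: float,
                            operational_kg_first_year: float,
                            degradation_per_year: float) -> float:
    """Average yearly CO2 (kg) for one server over its service life.

    Embodied carbon is spread evenly across the lifetime. Operational emissions
    are assumed to grow continuously by `degradation_per_year` as hardware ages,
    so their yearly average is the mean of base * (1 + d)**t over [0, lifetime].
    """
    embodied_per_year = embodied_kg / lifetime_years
    if degradation_per_year == 0:
        return embodied_per_year + operational_kg_first_year
    growth = math.log1p(degradation_per_year)
    mean_operational = (operational_kg_first_year
                        * math.expm1(growth * lifetime_years) / (growth * lifetime_years))
    return embodied_per_year + mean_operational


# Hypothetical server: 1,500 kg embodied, 2,000 kg/yr operational, 2%/yr degradation
for life in (4.0, 5.6):   # 5.6 = a 4-year refresh extended by about 1.6 years
    print(f"{life} years: {annualized_footprint_kg(1500, life, 2000, 0.02):.0f} kg CO2/yr")
```

With slow degradation, the longer life wins; with a much steeper degradation rate (say 20% per year) the comparison flips, which is why tracking hardware health matters more than extending every server's life by default.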

5. AI-Optimized Power Management

AI-optimized power management aligns power usage with real-time demand. Using machine learning, these systems monitor individual server behavior and adjust power consumption dynamically without compromising performance. By targeting inefficiencies directly at the server level, this approach addresses energy waste in ways other methods often miss.

Energy Efficiency Improvements

Practical applications of AI-driven energy management have shown impressive results. For instance, in early 2023, World Wide Technology (WWT) tested QiO Technologies’ Foresight Optima DC+ AI software on Dell R650 and R750 servers. The software analyzed server power patterns and achieved power reductions of 19–23% for steady loads and 27–29% for variable workloads. This also lowered exhaust temperatures, reducing cooling demands. The project, led by Technical Solutions Architects Chris Braun and Jeff Gargac, demonstrated these gains without any hardware changes.

"As server usage has historically been managed conservatively to guarantee uptime and Service Level Agreements (SLAs), sleep states have not been utilized effectively. Exploiting this fact with a data-driven optimization approach allows for significant energy consumption savings to be achieved without impacting QoS." – Gary Chandler, CTO, QiO Technologies

By tailoring power adjustments to real workload needs rather than worst-case scenarios, AI power management complements other strategies like cooling and scheduling, creating a more efficient system overall.
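QiO's software is proprietary, so the following is only an illustration of the general principle: size the active server pool to forecast demand plus a safety headroom, rather than to the worst case, and let the rest of the fleet enter sleep states. The 20% headroom, pool size, and request rates are hypothetical.

```python
import math


def plan_sleep_states(fleet_size: int, forecast_load: float,
                      per_server_capacity: float, headroom: float = 0.2,
                      min_active: int = 1) -> tuple[int, int]:
    """Return (servers to keep active, servers that can enter a sleep state).

    Capacity is provisioned for the forecast plus a safety headroom rather than
    for the worst case, and the remainder of the fleet is parked.
    """
    needed = math.ceil(forecast_load * (1.0 + headroom) / per_server_capacity)
    active = min(fleet_size, max(min_active, needed))
    return active, fleet_size - active


# Hypothetical pool: 20 servers, each handles ~100 req/s; forecast is 900 req/s
print(plan_sleep_states(20, 900.0, 100.0))   # (11, 9)
```

The headroom parameter is where the conservative-uptime trade-off lives: raising it protects SLAs against forecast error at the cost of fewer sleeping servers.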

Cost Reduction Potential

The financial benefits of AI power management are clear. By cutting electricity use, facilities can lower both operational costs and infrastructure expenses. For example, Microsoft Azure reduced its total energy consumption by 10% using machine learning for load forecasting and balancing. Similarly, Alibaba Cloud’s AI-powered battery and grid management saved 8% in energy costs and reduced carbon emissions by 5%. These software-based solutions are often more cost-effective than hardware upgrades or energy storage systems, making them accessible to a wide range of facilities.

AI also opens the door to demand response programs, which can provide utility credits and reduced tariffs. In a 2025 Phoenix trial, the Emerald Conductor platform cut cluster power usage by 25% over three hours during peak grid demand, all while maintaining Quality of Service. This was achieved by responding to utility signals from Salt River Project and Arizona Public Service, showcasing the potential for AI to make data centers more grid-friendly.

Scalability for Large Data Centers

AI power management is designed to scale seamlessly across distributed facilities. Platforms like Emerald Conductor use hierarchical control frameworks to coordinate workloads across multiple sites without requiring physical infrastructure changes. This flexibility is critical as global data center energy consumption is expected to hit 321 TWh by 2030, nearly 1.9% of global electricity use.

The system works by categorizing workloads based on their performance tolerance. For example, real-time inference tasks operate at full capacity (Flex 0), while large-scale model training can handle up to a 50% reduction in throughput (Flex 3). This tiered system allows facilities to adjust power usage during grid stress events without compromising service levels. Combined with tools like predictive analytics, dynamic cooling, and carbon-aware scheduling, AI-optimized power management forms a comprehensive energy-saving framework. Reinforcement learning agents further enhance efficiency by finding micro-optimizations tailored to each facility’s unique load patterns.
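A tiered curtailment plan can be sketched as follows: throttle the most flexible tier first, up to its tolerance, and move to less flexible tiers only if the target is not yet met. Flex 0 (no slowdown) and Flex 3 (up to 50%) follow the text; the Flex 1 and Flex 2 tolerances are hypothetical placeholders, and the assumption that power falls in proportion to throughput is a simplification.

```python
# Maximum throughput reduction each tier tolerates. Flex 0 and Flex 3 follow the
# text; the Flex 1 and Flex 2 values are hypothetical placeholders.
MAX_SLOWDOWN = {0: 0.0, 1: 0.10, 2: 0.25, 3: 0.50}


def plan_curtailment(tier_power_kw: dict[int, float], target_fraction: float) -> dict[int, float]:
    """Split a cluster-wide power reduction across flexibility tiers.

    The most flexible tiers are throttled first, each up to its tolerance.
    Simplifying assumption: power falls in proportion to throughput.
    """
    needed_kw = sum(tier_power_kw.values()) * target_fraction
    cuts = {}
    for tier in sorted(tier_power_kw, reverse=True):   # Flex 3 first, Flex 0 last
        cut = min(needed_kw, tier_power_kw[tier] * MAX_SLOWDOWN[tier])
        cuts[tier] = cut
        needed_kw -= cut
    if needed_kw > 1e-9:
        raise ValueError("target exceeds the cluster's available flexibility")
    return cuts


# Hypothetical 800 kW cluster asked to shed 25% during a grid event
print(plan_curtailment({0: 200.0, 1: 100.0, 2: 200.0, 3: 300.0}, 0.25))
# {3: 150.0, 2: 50.0, 1: 0.0, 0: 0.0}
```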

Environmental Impact Reduction

AI power management not only reduces energy consumption but also transforms data centers into active participants in renewable energy integration. By cutting power usage during times of high grid carbon intensity, these systems lower emissions and ease the strain on electrical infrastructure. The added benefit of reduced cooling needs amplifies these environmental gains across the entire facility.

"This demonstration marks a paradigm shift in the role of AI data centers–from static, high-load consumers to active, controllable grid participants." – Emerald AI Research Team

With load flexibility enabled by AI, data centers in the U.S. could unlock up to 100 GW of additional capacity by reducing power usage by 25% for less than 1% of the year – all without requiring new power plants or transmission lines. This shift not only supports sustainability goals but also ensures the grid remains resilient as demand continues to grow.

Wrapping It All Up

The five AI strategies – predictive analytics, real-time monitoring, dynamic cooling, carbon-aware scheduling, and AI-optimized power management – are reshaping data centers into highly efficient, grid-responsive facilities.

By tackling both IT loads and non-IT loads such as cooling, which alone can account for nearly 40% of a data center’s energy use, these approaches are proving their worth. Industry examples show that AI-driven methods can significantly cut both cooling energy and total consumption. The result? Lower costs, reduced carbon footprints, and longer hardware lifespans.

The days of reactive energy management are behind us. Proactive, AI-powered solutions offer a scalable way to handle increasing compute demands without a matching rise in energy usage. The tools are already here, and every kilowatt-hour saved means less strain on budgets and the environment. This isn’t just about cost control – it’s about taking meaningful steps toward sustainability.

"Efficiency must be treated as a strategic enabler. IT and data center leaders should focus on embedding efficiency into procurement decisions." – AMD Data Center Insights

While transitioning to AI-optimized energy management requires dedication, the rewards go far beyond financial savings. It strengthens resilience, boosts ESG scores, and allows facilities to actively contribute to grid stability. As energy prices shift and sustainability regulations tighten, these five AI strategies offer a clear path to creating data centers that are both high-performing and eco-conscious.

At Serverion, we’re committed to this vision. Our hosting solutions are built to incorporate these AI strategies, ensuring not just operational efficiency but also a brighter, more sustainable future.

FAQs

How can predictive analytics help make data centers more energy-efficient?

Predictive analytics improves energy efficiency in data centers by using machine learning to forecast energy demand and optimize how systems operate. Operators can make precise adjustments to cooling systems, balance workloads, and minimize energy waste; in one reported trial, AI-driven optimization cut server power draw by 19–29%.

By staying ahead of thermal and operational challenges, predictive analytics doesn’t just reduce energy expenses – it also helps equipment last longer, creating a more dependable and efficient data center setup.

How does real-time monitoring improve energy efficiency in data centers?

Real-time monitoring is central to improving energy use in data centers. It provides a constant stream of insights into critical factors like temperature, humidity, and IT load. With this data, cooling and power systems can adjust dynamically to meet current demand, cutting energy waste while keeping everything running smoothly.

On top of that, real-time data enables AI-powered predictive analytics. This means data centers can anticipate workload shifts and tweak systems proactively. The result? Better energy efficiency and fewer risks of downtime, as potential issues or equipment problems can be spotted and addressed early. Simply put, real-time monitoring is key to running smarter, more efficient, and cost-effective data centers.

How does AI-powered energy management help data centers become more sustainable?

AI-powered energy management is transforming how data centers handle energy use, focusing on efficiency and reducing waste. Using advanced algorithms, AI can forecast energy demand, fine-tune cooling systems in real time, and boost overall operational efficiency. This approach helps cut down both power consumption and carbon emissions.

Beyond cost savings, these strategies align data centers with the push for greener energy solutions, contributing to a more sustainable future and supporting global environmental goals.