How Data Centers Go Green

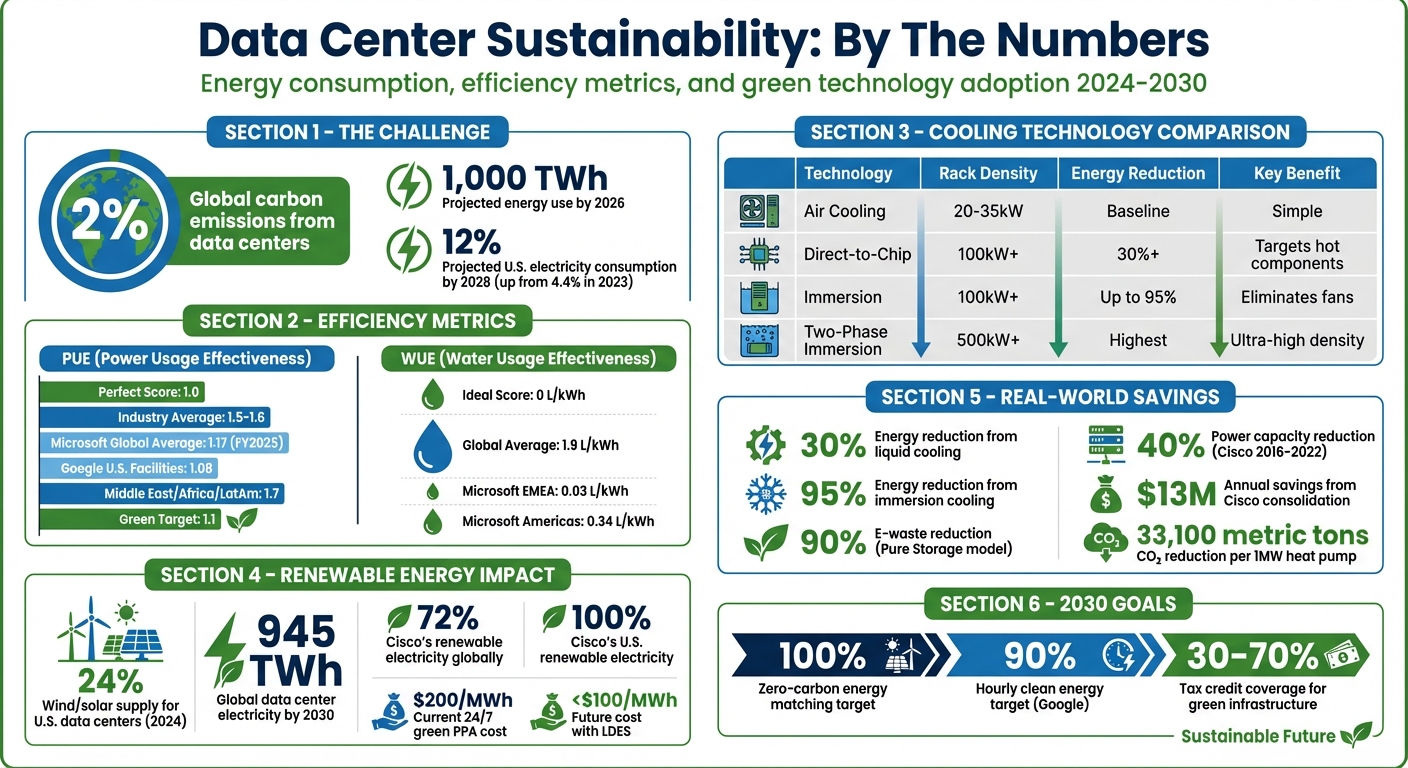

Data centers consume massive amounts of energy, contributing 2% of global carbon emissions. With demand rising due to AI and cloud computing, energy use could hit 1,000 TWh by 2026. Here’s how data centers are reducing their impact:

- Energy Efficiency: Metrics like PUE (Power Usage Effectiveness) and WUE (Water Usage Effectiveness) help track efficiency. Green centers aim for PUE near 1.0 and minimal water use.

- Renewable Energy: Solar, wind, and battery storage systems power operations while cutting reliance on fossil fuels.

- Advanced Cooling: Liquid cooling and free cooling reduce energy use by up to 30%, while seawater cooling eliminates freshwater needs.

- Waste Heat Recovery: Heat generated by IT equipment is reused for district heating or industrial processes.

- E-Waste Management: Recycling, refurbishing, and modular designs minimize electronic waste.

These shifts are driven by stricter regulations, corporate commitments, and financial incentives like tax credits. By adopting these practices, data centers are cutting costs, conserving resources, and meeting sustainability goals.

Data Center Sustainability Metrics and Impact Statistics 2024-2030



Energy Efficiency Metrics and Standards

Green metrics like PUE and WUE are essential for measuring how efficiently data centers use resources, offering clear guidance for improving operations.

Understanding PUE and WUE

PUE (Power Usage Effectiveness) evaluates energy efficiency by comparing total facility energy to the energy used by IT equipment. A perfect PUE score of 1.0 means all energy is dedicated to computing, with no overhead for cooling, lighting, or power distribution. While most data centers operate with PUEs between 1.5 and 1.6, industry leaders like Microsoft reported an impressive global average of 1.17 in fiscal year 2025.

WUE (Water Usage Effectiveness) measures water consumption per kilowatt-hour of IT energy. The ideal WUE is 0, which is achievable only when a facility consumes no freshwater for cooling, for example by relying exclusively on air cooling. On average, global WUE stands at 1.9 liters per kWh, but regional differences are stark. Microsoft’s fiscal year 2025 data highlights this variation: EMEA facilities achieved a WUE of just 0.03 L/kWh, while the Americas averaged 0.34 L/kWh.

These metrics highlight important trade-offs. For example, evaporative cooling can reduce PUE but increase water usage, while dry air cooling conserves water but demands more energy.
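As a minimal sketch of how the two ratios are computed, the snippet below applies the definitions given above to a set of meter readings. The function names and the sample readings are hypothetical and chosen only for illustration.

```python
def pue(total_facility_kwh: float, it_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return total_facility_kwh / it_kwh


def wue(water_liters: float, it_kwh: float) -> float:
    """Water Usage Effectiveness: liters of water per kWh of IT energy."""
    if it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return water_liters / it_kwh


# Hypothetical monthly meter readings (illustrative values only).
it_energy_kwh = 1_000_000
facility_energy_kwh = 1_170_000
water_used_liters = 340_000

print(f"PUE = {pue(facility_energy_kwh, it_energy_kwh):.2f}")
print(f"WUE = {wue(water_used_liters, it_energy_kwh):.2f} L/kWh")
```

Tracking both values from the same IT energy reading makes the trade-off visible: a cooling change that lowers one ratio while raising the other shows up immediately.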

Global Benchmarks and 2030 Goals

Performance varies widely by region. For instance, the Middle East, Africa, and Latin America average a PUE of 1.7, while Google’s U.S. facilities have achieved an impressive 1.08. Despite these advancements, the global average PUE has remained mostly unchanged since 2018. This stagnation reflects the efficiency challenges of older enterprise facilities, which offset the gains made by newer hyperscale data centers.

"Average PUE levels remain mostly flat for the fifth consecutive year, but this obscures advances in newer, larger facilities." – Uptime Institute Global Data Center Survey 2024

Looking ahead to 2030, major providers are committed to matching 100% of their energy use with zero-carbon or renewable energy sources. This shift is vital since indirect water consumption – used by power plants to generate electricity – is estimated to be 12 times higher than the water used directly for cooling. For context, coal plants consume roughly 19,185 gallons per MWh, whereas solar and wind energy require almost no water.
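As a rough illustration of why the supply mix matters for water, the sketch below estimates indirect water use from the coal figure quoted above. Treating solar and wind as exactly zero is an approximation of "almost no water", and the energy total and mix shares are hypothetical.

```python
# Water intensity of generation in gallons per MWh.
# The coal figure is the one quoted in the text; solar and wind are
# approximated as zero, which is an assumption made for this sketch.
WATER_GAL_PER_MWH = {"coal": 19_185.0, "solar": 0.0, "wind": 0.0}


def indirect_water_gallons(energy_mwh: float, mix: dict[str, float]) -> float:
    """Estimate water consumed by power plants to supply `energy_mwh`.

    `mix` maps a source name to its share of supply; shares must sum to 1.
    """
    if abs(sum(mix.values()) - 1.0) > 1e-9:
        raise ValueError("mix shares must sum to 1")
    intensity = sum(share * WATER_GAL_PER_MWH[source] for source, share in mix.items())
    return energy_mwh * intensity


# Hypothetical facility drawing 100 MWh under two supply mixes.
all_coal = indirect_water_gallons(100, {"coal": 1.0})
half_wind = indirect_water_gallons(100, {"coal": 0.5, "wind": 0.5})
print(f"100% coal:          {all_coal:,.0f} gallons")
print(f"50% coal, 50% wind: {half_wind:,.0f} gallons")
```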

These benchmarks underscore the need to rethink design strategies, a topic explored in the next section.

How Metrics Shape Design Decisions

Metrics like PUE and WUE directly influence how data centers are designed and operated. Operators must carefully balance these metrics, as focusing on one without considering the other can lead to unintended consequences. For instance, adopting ASHRAE A1 Allowable standards – which involve running facilities at slightly higher temperatures – can lower cooling energy demands while maintaining hardware reliability.

Emerging technologies are also reshaping efficiency strategies. Closed-loop and immersion cooling systems can cut freshwater consumption by up to 70%, though they may require more energy for air-cooled chillers. Similarly, using Direct Current (DC) configurations and bypassing Uninterruptible Power Supplies (UPS) can boost overall efficiency from 17.5% to 53.2% by reducing energy losses. However, fewer than 50% of operators currently track the advanced metrics necessary to meet upcoming sustainability regulations, leaving significant room for improvement.

These metrics are not just numbers – they drive innovations that will shape the future of sustainable data center operations, as detailed further in this article.

Renewable Energy Integration

Renewable energy plays a key role in cutting data center carbon emissions. As of 2024, wind and solar power supply about 24% of the electricity used by U.S. data centers. With global electricity use by data centers expected to reach 945 TWh by 2030, integrating renewables has become more than just an environmental initiative – it’s also a smart business move.

On-Site Renewable Energy Solutions

Installing solar panels and wind turbines directly at data center locations offers multiple benefits. These systems reduce energy losses from transmission, stabilize costs, and lessen reliance on utility grids that might still depend on fossil fuels.

Solar panels generate power only during the day, while wind turbines often produce more in the evening or during winter months. Together, they provide a steadier supply of carbon-free energy than either source alone. For example, Cisco’s Allen, Texas data center uses a 10 MW wind farm and rooftop solar panels, complemented by a rotary UPS system that avoids the environmental downsides of traditional lead-acid batteries. Similarly, Google operates a large-scale solar field at its St. Ghislain, Belgium data center, directly powering its operations.

A growing concept is the creation of "energy campuses" – facilities where renewable energy generation and data center infrastructure coexist. These setups allow centers to operate independently of traditional, often carbon-intensive, utility grids. Some operators reserve on-site renewables for non-IT uses, like powering lights and office spaces, while sourcing IT energy through other green methods. Cisco reports that 72% of its global data center electricity and 100% of its U.S. data center electricity comes from renewable sources, with 1.8 MW of on-site solar installed across its owned sites.

Pro tip: Assess the wind and solar potential of your site alongside the local transmission infrastructure. This helps identify the most cost-effective on-site energy solution. Combining solar and wind can also reduce the size – and cost – of battery storage needed.

On-site renewables lay the groundwork for energy storage systems to address the variability of renewable energy.

Battery Energy Storage Systems (BESS)

Since solar and wind energy production can be inconsistent, Battery Energy Storage Systems (BESS) are essential. These systems store surplus energy during peak production and release it when generation dips or demand spikes.

BESS makes renewable energy available on demand, which is critical for data centers that need uninterrupted power. Beyond serving as a backup, BESS also supports grid stability by regulating frequency and voltage, which is increasingly necessary as renewable energy becomes a larger part of the grid.

Operators use BESS for strategies like "peak shaving" (reducing peak-time energy use) and "load shifting" (using stored energy during expensive peak hours and recharging during cheaper, off-peak times). This flexibility can generate up to $0.58 per kVA load in daily revenue.
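Peak shaving and load shifting can be sketched as a simple greedy dispatch rule: discharge when load exceeds a target, recharge when it falls below. The version below is a simplified illustration, not a production dispatch algorithm. It assumes one-hour time steps and a lossless battery, and the load profile, threshold, and battery size are hypothetical.

```python
def peak_shave(load_kw, threshold_kw, capacity_kwh, max_power_kw, soc_kwh=0.0):
    """Return hourly grid draw (kW) after greedy peak shaving.

    Assumes 1-hour steps (so kW and kWh are interchangeable per step)
    and a lossless battery. `soc_kwh` is the initial state of charge.
    """
    grid_kw = []
    for load in load_kw:
        if load > threshold_kw:
            # Discharge to pull grid draw down toward the threshold.
            discharge = min(load - threshold_kw, max_power_kw, soc_kwh)
            soc_kwh -= discharge
            grid_kw.append(load - discharge)
        else:
            # Recharge with the headroom below the threshold.
            charge = min(threshold_kw - load, max_power_kw, capacity_kwh - soc_kwh)
            soc_kwh += charge
            grid_kw.append(load + charge)
    return grid_kw


# Hypothetical five-hour load profile in kW.
load = [600, 600, 1000, 1200, 800]
grid = peak_shave(load, threshold_kw=900, capacity_kwh=500, max_power_kw=300)
print("Load peak:", max(load), "kW")
print("Grid peak:", max(grid), "kW")
```

In this example the battery charges during the two low-load hours and discharges during the two high-load hours, so the grid never sees more than the 900 kW target.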

In Virginia, EVLO implemented a 300 MWh BESS to meet the energy demands of AI systems while supporting the state’s renewable energy goals. Meanwhile, the Humidor BESS Project in Los Angeles County, with 400 MW and 1,200 MWh of capacity, reduces reliance on gas-fired plants and generates $2 million annually in local tax revenue.

By smoothing out renewable energy inputs, BESS helps data centers approach near-zero carbon operations.

Key insight: BESS shouldn’t replace Uninterruptible Power Supplies (UPS). While UPS systems provide instant protection, BESS takes a few seconds to activate. Use both: UPS for immediate needs and BESS for longer-term energy support. Be sure to budget for maintenance and upgrades after about 10 years to maintain performance over the system’s 25–30 year lifespan.

Renewable Energy Procurement Strategies

For data centers that can’t generate enough on-site power, procurement strategies offer alternative solutions. Power Purchase Agreements (PPAs) and Renewable Energy Credits (RECs) are two common options.

PPAs allow operators to secure long-term, predictable energy costs – typically for 10–20 years – while directly funding new renewable energy projects. For instance, Google signed a 20-year PPA in 2010 for 114 MW of wind power from an Iowa farm to support its Council Bluffs data center. As of February 2025, Amazon Web Services remained the world’s largest corporate buyer of renewable energy, with over 100 solar and wind projects supplying its operations.

RECs, however, are primarily used for sustainability reporting and don’t typically offer cost savings. Companies relying heavily on RECs risk being accused of "greenwashing."

"Organizations risk charges of greenwashing if purchased renewable energy certificates are the main or only component of sustainability strategies." – Uptime Institute

The industry is now shifting toward 24/7 Carbon-Free Energy (CFE), which means matching every hour of energy use with local, carbon-free sources – not just offsetting annual totals. In early 2024, Google secured a 478 MW offshore wind PPA to power its Dutch data centers, aiming for 90% hourly clean energy through time-matched supply and storage. Microsoft has also tested 24/7 clean PPAs in Sweden, using hourly tracking to align energy demand with renewable supply.
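The difference between annual matching and hourly (24/7) matching is easiest to see with numbers. The sketch below compares the two scores for the same data. The scoring functions are a simplified illustration rather than any provider's official methodology, and the four-hour profile is hypothetical.

```python
def annual_match(load_mwh, clean_mwh):
    """Share of total load covered when clean energy is netted over the whole period."""
    return min(1.0, sum(clean_mwh) / sum(load_mwh))


def hourly_match(load_mwh, clean_mwh):
    """Share of load covered when clean energy only counts in the hour it is produced."""
    matched = sum(min(load, clean) for load, clean in zip(load_mwh, clean_mwh))
    return matched / sum(load_mwh)


# Hypothetical profile: flat load, clean supply concentrated in two hours.
load = [10, 10, 10, 10]
clean = [0, 25, 15, 0]

print(f"Annual-style match: {annual_match(load, clean):.0%}")
print(f"Hourly match:       {hourly_match(load, clean):.0%}")
```

Here the totals balance, so the annual-style score is 100%, yet only half of the load is actually covered hour by hour. Storage or a more complementary supply mix is what closes that gap.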

Currently, a 24/7 green PPA using wind, solar, and lithium-ion systems costs over $200 per MWh in most areas. However, incorporating Long Duration Energy Storage (LDES) could drop costs below $100 per MWh. In the U.S., the federal Investment Tax Credit (ITC) offers a 30% tax credit for renewable energy projects, making these investments more attractive.

Next step: Diversify your renewable energy sources by combining wind and solar for a steadier supply. If you’re in a shared facility, ensure your contract clearly defines responsibilities for renewable energy procurement and REC ownership.

Advanced Cooling Technologies

Cooling systems can account for up to 40% of a data center’s total energy consumption. With AI workloads driving rack densities to unprecedented levels – expected to hit 50kW by 2027 – traditional air cooling methods are struggling to keep up. Air cooling is effective up to about 280W per chip, but new AI processors are on track to exceed 700W by 2025. Advanced cooling methods are stepping in to address these challenges, improving energy efficiency and supporting the evolving demands of AI-heavy data centers.

Liquid Cooling Systems

Liquid cooling is emerging as a powerful alternative to air cooling, largely due to water’s superior heat removal capabilities – about 2.7 times greater than air. This efficiency translates into significant energy savings, with liquid cooling cutting total data center energy use by at least 30% compared to air-based systems.

There are three main liquid cooling methods:

- Direct-to-Chip (DTC): Uses microchannel cold plates to cool specific components.

- Immersion Cooling: Submerges servers in a dielectric fluid for maximum heat dissipation.

- Rear Door Heat Exchangers (RDHx): Places liquid-filled coils on server racks to manage heat.

"Whatever liquid cooling technology is chosen, it will always be more efficient than air since the amount of energy required for forced convection with air will always be several times greater than that to move a liquid for the same amount of cooling." – Mohammad Azarifar, Auburn University

Immersion cooling, in particular, can slash energy use by up to 95% and reduce water consumption by 90%. Direct liquid cooling achieves impressive heat transfer rates of 25 W/cm²-K in water-based systems. Facilities adopting these technologies aim for Power Usage Effectiveness (PUE) as low as 1.1, compared to the global average of 1.55 in 2022.

Real-world examples are already showcasing these advancements. In late 2024, Start Campus’s SIN01 facility in Portugal began delivering 15MW of IT capacity using seawater-based cooling alongside liquid cooling technologies, supporting racks exceeding 100kW with a PUE target of 1.1. Similarly, Digital Realty’s La Courneuve hub in Paris, launched in 2023, incorporates direct liquid cooling to handle high-density AI workloads while reducing emissions.

Important note: Liquid-cooled racks don’t inherently manage humidity, so a separate system is necessary. Additionally, DTC systems still rely on air cooling for peripheral components, making them a partial rather than complete solution.

Free Cooling and Seawater Cooling

Free cooling methods complement liquid cooling by leveraging natural resources to reduce energy use. These systems use ambient air or water to bypass mechanical chillers, cutting energy consumption significantly. In fact, free cooling can be 20 times more energy-efficient than traditional methods, directly reducing carbon emissions.

Seawater cooling is particularly effective for coastal facilities. By using non-potable ocean water, these systems achieve a Water Usage Effectiveness (WUE) of 0, meaning they consume no freshwater. For instance, the SIN01 facility in Portugal uses Atlantic seawater to support scalable AI infrastructure. Similarly, Digital Realty’s Cloud House in London draws cooling water from the River Thames, returning the same volume it withdraws to maintain a sustainable cycle. In Singapore, Digital Realty’s SIN10 facility saves 1.24 million liters of water monthly by using DCI electrolysis to extend water life cycles and eliminate chemical treatments.

"Free air cooling can be one of a risk-adverse and energy-efficient solution for companies looking to minimize the carbon footprint of their data center deployments." – Kyle Chien, Sr. Director, Platform Innovation, Digital Realty

The success of free cooling depends heavily on local conditions. A detailed microclimate study is essential to determine whether temperature and humidity levels allow for effective deployment. In dry climates, evaporative cooling can cut energy use by up to 80%, offering another efficient option.
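A first pass at such a study can be as simple as counting how many hours of local weather data fall within acceptable limits. The sketch below assumes hourly readings of dry-bulb temperature and relative humidity. The threshold values and sample data are hypothetical and would need to come from the facility's own design envelope.

```python
def free_cooling_fraction(readings, max_temp_c, max_rh_pct):
    """Fraction of hourly readings where outside air is usable for free cooling.

    `readings` is a sequence of (dry_bulb_temp_c, relative_humidity_pct) pairs.
    An hour counts as usable only if both limits are met.
    """
    if not readings:
        raise ValueError("need at least one reading")
    usable = sum(
        1
        for temp_c, rh_pct in readings
        if temp_c <= max_temp_c and rh_pct <= max_rh_pct
    )
    return usable / len(readings)


# Hypothetical sample of four hourly readings: (temperature in °C, RH in %).
sample = [(12.0, 60.0), (18.0, 85.0), (24.0, 40.0), (30.0, 30.0)]
share = free_cooling_fraction(sample, max_temp_c=25.0, max_rh_pct=80.0)
print(f"Free cooling usable in {share:.0%} of sampled hours")
```

Run against a full year of hourly data, the same count gives a rough estimate of how many hours mechanical chillers could stay off.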

Cooling Solutions for High-Density Servers

AI and high-performance computing are pushing rack densities beyond 100kW, far exceeding the limits of air cooling, which caps out at 20–35kW. Two-phase immersion cooling is one solution for these extreme demands. It uses the latent heat from boiling and re-condensing dielectric fluid to manage tank power densities over 500kW.

However, two-phase systems face regulatory challenges, particularly around the use of polyfluoroalkyl substances (PFAS) in fluorinated cooling fluids. Single-phase immersion cooling offers a simpler alternative, though it lacks the advanced flow control of two-phase systems and is limited by the properties of dielectric liquids.

Life Cycle Assessments show that liquid cooling can significantly reduce energy demand, greenhouse gas emissions, and water consumption compared to air cooling. For data centers handling AI workloads, these benefits make liquid cooling a necessity.

The table below compares the key cooling technologies:

| Technology | Rack Density Limit | Energy Reduction | Primary Advantage |
|---|---|---|---|
| Air Cooling | 20-35kW | Baseline | Simple, widely available |
| Direct-to-Chip | 100kW+ | 30%+ | Targets hottest components |
| Immersion | 100kW+ | Up to 95% | Eliminates fans, compact design |
| Two-Phase Immersion | 500kW+ | Highest | Supports ultra-high densities |
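The air-cooling range in the table can be turned into a rough first-pass screening rule. The sketch below is one possible reading of those figures, not a sizing tool: it treats 20 kW as the comfortable limit for air and 35 kW as its ceiling, and the function name and return strings are hypothetical.

```python
AIR_COMFORTABLE_KW = 20  # lower end of the air-cooling range in the table
AIR_CEILING_KW = 35      # upper end of the air-cooling range in the table


def cooling_shortlist(rack_kw: float) -> str:
    """Suggest a cooling approach for a given rack density (kW per rack)."""
    if rack_kw <= 0:
        raise ValueError("rack density must be positive")
    if rack_kw <= AIR_COMFORTABLE_KW:
        return "air cooling"
    if rack_kw <= AIR_CEILING_KW:
        # Borderline densities: a hybrid retrofit may be enough.
        return "air cooling, possibly with RDHx or direct-to-chip assist"
    return "liquid cooling (direct-to-chip or immersion)"


for density_kw in (10, 30, 120):
    print(f"{density_kw:>4} kW/rack -> {cooling_shortlist(density_kw)}")
```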

Retrofitting tips: Transitioning to liquid cooling requires adjustments to floor layouts, rack configurations, and leak detection systems. A hybrid approach, combining air cooling with RDHx or DTC systems, can minimize the need for extensive facility upgrades.

Green Practices in Data Centers

Data centers are adopting principles of the circular economy to cut waste and recover resources. These efforts are turning facilities into community assets, reducing their environmental footprint while finding new ways to use what might otherwise be discarded.

Waste Heat Recovery

Data centers convert up to 90% of their IT energy into heat, much of which can be recovered. For example, in Germany, over 13 TWh of electricity per year is converted into heat, though most of it currently goes unused.

The heat generated by data centers typically ranges from 77°F to 104°F (25–40°C), which is considered low-grade. To make this heat useful for residential heating or industrial processes, facilities use high-temperature heat pumps (HTHPs) to raise water temperatures up to 248°F (120°C). These pumps are highly efficient, delivering 3 to 6 times as much heat as the electricity they consume.
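The ratio of heat delivered to electricity consumed is a heat pump's coefficient of performance (COP), so the range above corresponds to a COP of roughly 3 to 6. The sketch below works through the energy balance for an idealized heat pump with losses ignored. The 1,200 kW heat demand is a hypothetical figure.

```python
def heat_pump_balance(heat_demand_kw: float, cop: float) -> tuple[float, float]:
    """Return (electric_input_kw, waste_heat_absorbed_kw) for an idealized heat pump.

    COP = heat delivered / electricity consumed. With losses ignored, the
    delivered heat equals the electricity plus the waste heat absorbed.
    """
    if cop <= 1:
        raise ValueError("COP must be greater than 1")
    electric_kw = heat_demand_kw / cop
    waste_heat_kw = heat_demand_kw - electric_kw
    return electric_kw, waste_heat_kw


# Hypothetical district-heating demand of 1,200 kW at both ends of the COP range.
for cop in (3.0, 6.0):
    electric, recovered = heat_pump_balance(1_200.0, cop)
    print(f"COP {cop:.0f}: {electric:.0f} kW electricity, {recovered:.0f} kW recovered waste heat")
```

At the higher COP, the same heat demand needs half the electricity, and a larger share of the delivered heat comes from the data center itself.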

Several projects highlight the potential of waste heat recovery:

- In 2022, Microsoft and Fortum developed a system in Finnish data centers to supply 40% of the heating needs for 250,000 residents.

- Equinix’s PA10 data center in Paris, launched in 2023, provides surplus heat free of charge for 15 years to the Plaine Saulnier urban development zone, including a swimming pool for the Paris Olympics.

- Facebook’s Odense, Denmark facility donates up to 100,000 MWh of waste energy annually to the city’s district heating system, benefiting residential heating and cutting emissions equivalent to removing 13,000 cars from the road each year.

Liquid cooling is making heat recovery even more effective. These systems generate higher-temperature waste heat compared to traditional air cooling. A 1 MW high-temperature heat pump can slash annual CO2 emissions by 33,100–33,200 metric tons, achieving an 85.4%–85.6% reduction compared to natural gas boilers.

"By embracing circular economy practices, data centers can transform from isolated entities into integrated community assets." – Scott Jarnagin, CEO, Caddis Cloud Solutions

Regulations are also driving change. The EU’s revised Energy Efficiency Directive (EED) now requires data centers with energy inputs of 1 MW or more to reuse their waste heat unless it’s technically or economically unfeasible. This mandate is speeding up adoption across Europe, and similar policies are emerging globally.

While waste heat is being repurposed, data centers are also addressing another major challenge: e-waste.

E-Waste Management

Frequent IT upgrades, typically every 3–5 years, produce considerable e-waste. Components often contain hazardous materials like lead, lithium, mercury, and cadmium, making proper disposal essential for environmental safety.

Some companies are leading the way in responsible e-waste management:

- Amazon Web Services (AWS) has diverted 14.6 million hardware components from landfills by recycling or selling them through its "Reverse Manufacturing" program.

- Pure Storage offers a "Storage-as-a-Service" model, allowing customers to upgrade components without replacing entire systems. This approach cuts energy use by up to a factor of five and reduces e-waste by at least 90%.

- Carrier/Sensitech’s Device Takeback Program has reclaimed 8.5 million temperature data instruments for reuse since 2021.

- Vertiv’s Trade-In Program ensures old Uninterruptible Power Supply (UPS) systems are safely disposed of or refurbished.

Specialized recycling partnerships recover valuable materials from outdated equipment while minimizing harm from toxic substances. Additionally, better cooling strategies extend the lifespan of IT hardware, reducing the need for frequent replacements.

Circular Economy Approaches

Beyond heat recovery and recycling, data centers are adopting broader circular economy strategies to maximize resource use. Modular designs allow for component-level upgrades instead of full replacements, cutting down on waste and lowering costs.

Data centers are also finding innovative ways to repurpose resources:

- Treated wastewater is being used for cooling systems.

- Waste heat is being utilized for on-site carbon capture or water purification.

A standout example is EcoDataCenter in Falun, Sweden, which integrates its waste heat into a neighboring industrial ecosystem. The heat is used by a nearby factory to dry wood pellets, creating a closed-loop energy system.

In the UK, Deep Green implemented a "digital boiler" at a public swimming pool in Exmouth in March 2023. The heat from a small-scale data center now keeps the pool warm, significantly reducing its reliance on gas.

"Extending the operational phase of IT equipment, through optimal cooling strategies and reusability of components reduces electronic waste and minimizes carbon footprint." – ABI Research

Switching from air cooling to liquid cooling technologies like cold plates can cut water use by 30% to 50% and reduce cooling-related power consumption by 20% to 30%. These systems not only improve energy efficiency but also produce higher-quality waste heat, making it easier to recover and reuse.

Together, these efforts demonstrate the potential for data centers to operate in a way that’s both efficient and environmentally responsible, aligning with the principles of green hosting.

Policy and Industry Initiatives

Governments and industry leaders are pushing for greener data centers through a mix of regulations and financial incentives.

Government Policies Driving Change

In the United States, data center development has been elevated to a national priority, with a strong focus on cleaner operations. In July 2025, President Donald J. Trump signed Executive Order 14318, aimed at speeding up federal permitting for data center infrastructure. This includes prioritizing high-voltage transmission and reliable baseload power.

"My Administration will pursue bold, large-scale industrial plans to vault the United States further into the lead on critical manufacturing processes and technologies… including artificial intelligence (AI) data centers and infrastructure that powers them." – Donald J. Trump, President of the United States

The Environmental Protection Agency (EPA) introduced the "Powering the Great American Comeback" initiative to streamline Clean Air Act reviews. This approach simplifies the environmental review process for backup and primary power sources. As EPA Administrator Lee Zeldin stated:

"Simplifying Clean Air Act reviews accelerates AI infrastructure development."

Singapore has taken a collaborative approach with its Green Data Centre Roadmap, developed alongside industry stakeholders. This roadmap aims to add 300 MW of new capacity while requiring facilities to achieve a Power Usage Effectiveness (PUE) of 1.3 or better within the next decade. In July 2023, Singapore provisionally awarded 80 MW of capacity to companies like AirTrunk-ByteDance, Equinix, GDS, and Microsoft, based on their adherence to top-tier energy efficiency standards and Green Mark DC Platinum Certification. An additional 200 MW has been reserved for operators using renewable energy sources.

These policies pave the way for financial incentives that significantly cut capital costs for green projects.

Financial Incentives for Green Transitions

In the U.S., federal tax credits play a big role in reducing the costs of green infrastructure. The Section 48E Clean Electricity Investment Tax Credit offers a base 30% credit for investments in zero-emission electricity facilities and energy storage systems. With bonuses for domestic content or projects in "energy communities" (areas affected by coal plant closures or brownfield sites), this credit can climb to 70%.

| Tax Credit | IRC Section | Base Benefit | Maximum Benefit | Eligible Technologies |
|---|---|---|---|---|
| Clean Electricity ITC | 48E | 30% | 70% | Zero-emission electricity facilities |
| Energy-Efficient Buildings | 179D | Up to $5+ per sq ft | Varies | HVAC, lighting, building envelope |
| Zero-Emission Nuclear Credit | 45U | 1.5 cents/kWh | N/A | Existing nuclear facilities |
| Carbon Oxide Sequestration | 45Q | $12–$85/ton | Varies | Natural gas with carbon capture (CCS) |

These incentives are driving major investments. For example, Microsoft entered a deal with Constellation Energy in September 2024 to reopen the Unit 2 nuclear reactor at Three Mile Island by 2028, leveraging tax breaks for nuclear power from the 2022 Inflation Reduction Act. Similarly, Amazon secured a contract with Talen Energy in June 2025 for 1,920 MW of carbon-free nuclear power through 2042, with plans to explore Small Modular Reactors (SMRs).

Singapore also offers direct grants, such as the Energy Efficiency Grant (EEG), which provides up to 70% co-funding for small and medium enterprises adopting energy-efficient IT equipment, capped at $30,000 per company. Additionally, the Water Efficiency Fund supports facilities installing recycling plants and optimizing cooling towers, particularly for data centers consuming at least 60,000 cubic meters of water annually.

As these financial incentives evolve, new energy trends are reshaping how data centers source power.

Future Trends and Recommendations

Nuclear and geothermal energy are gaining ground as companies seek 24/7 carbon-free baseload power. In June 2024, Google partnered with Fervo Energy and NV Energy to develop a 500 MW geothermal project in Utah, scalable to 2 GW. Similarly, Meta teamed up with Sage Geosystems in August 2024 to deliver 150 MW of geothermal power by 2027.

On-site power generation is also gaining traction as developers look to avoid grid connection delays. Some are exploring natural gas turbines equipped with future carbon capture capabilities, which qualify for the Section 45Q tax credit of $12 to $85 per ton of captured carbon.

Collaboration within the industry is crucial for progress. The Green Software Foundation emphasizes the importance of efficient programming to reduce carbon emissions. Chairman Sanjay Podder noted:

"Good software programming is something we have lost track of as lazy programmers in this new era of abundance."

Singapore’s Green Data Centre Roadmap is treated as a dynamic plan, evolving through collaboration with operators, end-users, suppliers, and academic institutions.

Data center operators are also encouraged to conduct cost segregation studies to reclassify building assets into shorter-lived categories, accelerating depreciation deductions. Additionally, they should keep an eye on deadlines – such as the accelerated termination of Section 179D deductions in June 2026 under the One Big Beautiful Bill Act – to maximize tax benefits. Early planning in site selection can offset 30% to 70% of capital costs for green infrastructure.

These emerging technologies, along with supportive policies and incentives, are driving the transition toward greener and more efficient data centers.

Conclusion

Key Takeaways

The move toward greener data centers isn’t just about reducing emissions – it’s also about cutting costs and staying competitive. Energy remains the biggest expense for data centers, with global consumption expected to surpass 1,000 TWh by 2026. By improving efficiency, operators can significantly lower their bills. Technologies like advanced cooling systems, renewable energy integration, and waste heat recovery are making a big difference. For instance, a data center in Beijing using transcritical CO₂ heat pumps reduced CO₂ emissions by 12,880 tons annually and trimmed investment costs by 10.2%. Similarly, Cisco’s global consolidation program between 2016 and 2022 slashed power capacity by 40%, saving $13 million annually.

Metrics like PUE (Power Usage Effectiveness), WUE (Water Usage Effectiveness), and CUE (Carbon Usage Effectiveness) are critical for tracking these improvements. With AI workloads pushing server rack densities beyond the 20–35 kW that air cooling can handle, traditional air cooling is reaching its limits. Liquid cooling and waste heat recovery are now essential for high-density operations. Additionally, government incentives and policies are speeding up the adoption of eco-friendly practices across the industry.
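CUE is not defined earlier in this article. Under the commonly used Green Grid definition, it is the carbon emitted by a facility's energy use divided by its IT energy, which works out to PUE multiplied by the carbon intensity of the energy supply. The sketch below shows both forms with hypothetical inputs.

```python
def cue(total_co2_kg: float, it_kwh: float) -> float:
    """Carbon Usage Effectiveness: kg CO2e emitted per kWh of IT energy."""
    if it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return total_co2_kg / it_kwh


def cue_from_pue(pue: float, carbon_kg_per_kwh: float) -> float:
    """Equivalent form: PUE times the carbon intensity of the energy supply."""
    return pue * carbon_kg_per_kwh


# Hypothetical facility: PUE of 1.2 on a supply emitting 0.4 kg CO2e per kWh.
it_kwh = 1_000_000
facility_kwh = 1_200_000
emissions_kg = facility_kwh * 0.4

print(f"CUE from emissions: {cue(emissions_kg, it_kwh):.2f} kg CO2e/kWh")
print(f"CUE from PUE:       {cue_from_pue(1.2, 0.4):.2f} kg CO2e/kWh")
```

The second form shows the two levers for lowering CUE: reduce overhead (PUE) or switch to a cleaner supply.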

Why Green Data Centers Matter for Hosting

For hosting providers, green data centers are more than an environmental choice – they’re a strategic advantage. Clients increasingly look for sustainable options, with certifications like LEED and Energy Star becoming key differentiators. Cloud computing alone could reduce the global IT carbon footprint by up to 38%. Modern servers also deliver more efficiency, supporting 312% more virtual machines per blade than in 2016 while cutting energy use per VM by 27%.

Reliability improves, too. Renewable energy combined with battery storage ensures more stable power, even during grid disruptions or extreme weather events. In 2025, 1 in 10 data center outages caused severe disruptions, highlighting the need for resilient infrastructure. Green data centers are also evolving into energy collaborators, feeding surplus renewable energy or repurposing waste heat into local grids, which strengthens their role in smart energy networks.

Looking Ahead

The future of hosting will increasingly favor sustainable infrastructure. By 2028, U.S. data centers could consume up to 12% of the nation’s electricity, compared to 4.4% in 2023. Meeting this demand responsibly requires immediate action. Hosting providers should seek green certifications, choose locations with renewable energy access, and adopt server virtualization to minimize hardware needs. Businesses looking for hosting solutions should evaluate providers’ sustainability efforts and explore hybrid models that balance on-premises needs with green cloud services. Circular practices, like refurbishing equipment and managing e-waste responsibly, will soon become standard as regulations tighten.

At Serverion (https://serverion.com), we’re dedicated to advancing these sustainable solutions, ensuring high-performance hosting that’s ready for the challenges ahead.

FAQs

What steps do data centers take to improve energy efficiency and achieve low PUE scores?

Data centers keep their Power Usage Effectiveness (PUE) scores low by adopting energy-smart technologies and practices. They rely on cutting-edge servers and hardware that are built to deliver top performance while using less power. To tackle the challenge of cooling, they use methods like liquid cooling, free cooling, or hot aisle/cold aisle containment, which help cut down on the energy needed to manage temperatures.

Beyond cooling, many data centers turn to renewable energy sources, efficient power distribution systems, and real-time monitoring tools to fine-tune energy use. By blending advanced cooling techniques, cleaner energy options, and streamlined operations, data centers not only improve their PUE but also lessen their overall environmental footprint.

How does renewable energy make data centers more sustainable?

Renewable energy plays a crucial role in helping data centers become more sustainable by cutting down their carbon emissions and reducing dependence on non-renewable energy sources. Incorporating energy solutions like solar power, wind energy, and hydrogen fuel cells allows data centers to significantly curb greenhouse gas emissions while contributing to global climate action.

Beyond the environmental advantages, renewable energy can also lead to lower operational costs and greater energy efficiency – an increasingly important factor as energy demands surge with the growth of AI and other resource-intensive technologies. Pairing renewable energy with advancements like waste heat recovery systems and smart energy management tools enables data centers to shrink their environmental footprint without compromising on performance or reliability.

This transition is a critical step toward building climate-neutral digital infrastructure and supporting a more sustainable future for everyone.

Why is liquid cooling crucial for modern data centers?

Liquid cooling is gaining traction in modern data centers as a smarter way to handle the heat produced by today’s high-performance hardware. This includes systems running artificial intelligence (AI) and other demanding applications. Unlike traditional air cooling, liquid cooling is far better at transferring heat, which helps cut down on energy usage and keeps operational costs in check.

With data centers increasingly relying on higher-density hardware and advanced technologies, liquid cooling not only boosts performance but also lessens the strain on resources. It supports higher operating temperatures while using less water and electricity, offering a more resource-conscious approach to maintaining the reliability and efficiency of critical systems.